💡 Introduction

In large analytics organizations, data reliability often fails silently—not because of big outages, but because of unseen schema shifts. A single renamed column or missing field can ripple across dashboards, models, and pipelines without anyone noticing until it’s too late.

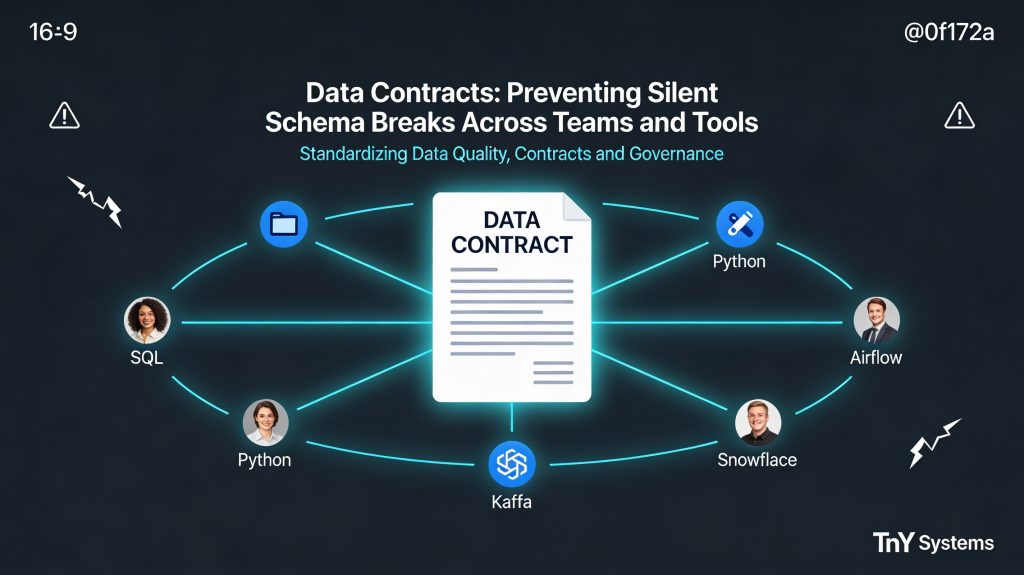

That’s why data contracts have emerged as a cornerstone of modern data platform design. For data leaders tackling ownership, observability, and governance challenges, contracts create technical and organizational alignment. They define what “good data” looks like, how change happens safely, and where accountability sits when something breaks.

In 2025, reliability-focused teams increasingly treat data contracts as the backbone of schema governance and data quality SLAs across their platforms.

🔍 What Are Data Contracts?

A data contract is a formal, machine‑readable agreement defining expectations between data producers and consumers.

It codifies:

- Schema definitions (names, types, formats)

- Acceptable changes and compatibility rules

- Data quality thresholds and SLAs

- Ownership metadata for accountability

Think of it as an API contract — but for data.

Just like APIs prevent software regressions through well-defined interfaces, data contracts prevent accidental regressions in data pipelines.
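To make the four elements above concrete, here is a minimal sketch of a contract expressed as plain Python dataclasses. The class names, field names, and the `orders` dataset are all hypothetical, chosen only for illustration. In practice, contracts are often stored as YAML or JSON files and loaded into a structure like this.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Tuple


@dataclass(frozen=True)
class Column:
    name: str
    dtype: str             # logical type such as "string", "int64", "timestamp"
    nullable: bool = True


@dataclass(frozen=True)
class DataContract:
    dataset: str
    version: str                       # version of the contract itself
    owner: str                         # team accountable for the dataset
    columns: Tuple[Column, ...]        # schema definition
    compatibility: str = "backward"    # rule that schema changes must satisfy
    min_non_null_rate: Dict[str, float] = field(default_factory=dict)  # quality SLA
    max_staleness_minutes: Optional[int] = None                        # freshness SLA

    def column_names(self):
        return [column.name for column in self.columns]


# Hypothetical contract for an "orders" dataset.
orders_contract = DataContract(
    dataset="orders",
    version="1.0.0",
    owner="checkout-team",
    columns=(
        Column("order_id", "int64", nullable=False),
        Column("amount", "float64", nullable=False),
        Column("created_at", "timestamp", nullable=False),
    ),
    min_non_null_rate={"order_id": 0.999},
    max_staleness_minutes=60,
)

print(orders_contract.column_names())
```

Because the contract is an ordinary object, the same definition can drive validation, documentation, and alert routing instead of living only in a wiki page.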

🧠 Quick insight: Without data contracts, modern data teams rely on conventions and Slack messages for schema governance — an unreliable combination at scale.

🧩 Why Silent Schema Breaks Happen

Silent schema breaks occur when upstream teams modify data structures without adequately signaling the change downstream.

Common examples:

❌ Renaming or deleting a column used in reports

❌ Changing data types that affect joins or aggregations

❌ Altering timestamp formats that break parsing logic

When ownership is unclear, even mission‑critical datasets can lose integrity overnight. These incidents lead to broken dashboards, failed models, and slow root cause analysis — all avoidable with clear data contract policies.
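The three failure modes listed above can each be caught by a small check at the boundary between producer and consumer. The following is a rough sketch, assuming records arrive as Python dictionaries and that timestamps are expected in ISO 8601 format. The `EXPECTED` schema and the function name are hypothetical.

```python
from datetime import datetime

# Hypothetical expected schema: column name -> Python type,
# or the marker "iso8601" for timestamp strings.
EXPECTED = {
    "order_id": int,
    "amount": float,
    "created_at": "iso8601",
}


def find_schema_violations(record, expected):
    """Return human-readable problems found in a single record."""
    problems = []
    for name, expected_type in expected.items():
        if name not in record:
            problems.append(f"missing column: {name}")
            continue
        value = record[name]
        if expected_type == "iso8601":
            try:
                datetime.fromisoformat(value)
            except (TypeError, ValueError):
                problems.append(f"bad timestamp format in {name}: {value!r}")
        elif not isinstance(value, expected_type):
            problems.append(
                f"type changed for {name}: expected {expected_type.__name__}, "
                f"got {type(value).__name__}"
            )
    # Columns the contract does not know about often indicate a rename.
    for name in sorted(record.keys() - expected.keys()):
        problems.append(f"unexpected column: {name}")
    return problems


# A record after an unannounced upstream change:
# order_id renamed to id, amount sent as text, timestamp format altered.
broken = {"id": 42, "amount": "19.99", "created_at": "05/01/2025 10:00"}
for problem in find_schema_violations(broken, EXPECTED):
    print(problem)
```

Run against the `broken` record, the check reports all three problems plus the unexpected `id` column, which is exactly the signal that is missing when a break is silent.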

⚖️ Schema Governance and Backward Compatibility

Modern schema governance relies on two core principles: transparency and compatibility.

Transparency means every schema change must be versioned, reviewed, and communicated.

Backward compatibility means consumers built against the previous schema version keep working after a change.

Best practices for maintaining both:

- 📜 Version schemas like software — tag and publish contract artifacts.

- 🔄 Add new columns instead of renaming or removing existing ones.

- 🧪 Test compatibility on staging replicas before production rollout.

- 📣 Automate contract validation in CI/CD pipelines for data jobs.

These guardrails transform governance from policy into automation, enabling teams to ship faster while staying compliant.
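As an illustration of what such an automated guardrail might look like, the sketch below compares two schema versions and returns a non-zero exit code when a change would break existing consumers. It uses a deliberately simplified definition of backward compatibility (no removed, renamed, or retyped columns; added columns are allowed). The function names and schemas are hypothetical, and real schema registries apply richer rules.

```python
from typing import Dict, List


def breaking_changes(old: Dict[str, str], new: Dict[str, str]) -> List[str]:
    """List changes that would break consumers of the old schema."""
    changes = []
    for name, old_type in old.items():
        if name not in new:
            changes.append(f"column removed or renamed: {name}")
        elif new[name] != old_type:
            changes.append(f"type changed for {name}: {old_type} -> {new[name]}")
    return changes  # added columns are not reported: they are compatible


def ci_exit_code(old: Dict[str, str], new: Dict[str, str]) -> int:
    """Return 1 if the change is breaking, so a CI step can fail the build."""
    problems = breaking_changes(old, new)
    for problem in problems:
        print(f"BREAKING: {problem}")
    return 1 if problems else 0


v1 = {"order_id": "int64", "amount": "float64"}
v2_additive = {"order_id": "int64", "amount": "float64", "currency": "string"}
v2_breaking = {"id": "int64", "amount": "string"}

print(ci_exit_code(v1, v2_additive))  # additive change passes
print(ci_exit_code(v1, v2_breaking))  # rename plus type change fails
```

In a pipeline, the value from `ci_exit_code` would typically be passed to `sys.exit` so that a breaking change blocks the merge until it is reviewed.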

📊 Data Quality SLAs and Ownership

Contracts aren’t just about structure — they also define behavior.

Data quality SLAs provide measurable assurances. For example:

- At least 99.9% of values non-null for key business identifiers

- Column cardinality thresholds to detect value drift

- Time‑based freshness guarantees for streaming pipelines
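Each of these SLAs reduces to a small, testable function. The sketch below assumes a batch of rows held as dictionaries and uses hypothetical thresholds (99.9% non-null, at most two distinct statuses, 15 minutes of staleness). A production setup would more likely run equivalent checks as SQL or through a data quality framework.

```python
from datetime import datetime, timedelta, timezone


def non_null_rate(rows, column):
    """Share of rows where `column` is present and not None."""
    if not rows:
        return 0.0  # assumption for this sketch: an empty batch fails the check
    non_null = sum(1 for row in rows if row.get(column) is not None)
    return non_null / len(rows)


def distinct_count(rows, column):
    """Number of distinct non-null values, a simple cardinality measure."""
    return len({row.get(column) for row in rows if row.get(column) is not None})


def is_fresh(latest_event_time, now, max_age):
    """True if the newest event is no older than `max_age`."""
    return now - latest_event_time <= max_age


rows = [
    {"order_id": 1, "status": "paid"},
    {"order_id": 2, "status": "paid"},
    {"order_id": None, "status": "refunded"},
    {"order_id": 4, "status": "PAID"},  # unexpected new value: drift
]

now = datetime(2025, 1, 5, 12, 0, tzinfo=timezone.utc)
latest = datetime(2025, 1, 5, 11, 50, tzinfo=timezone.utc)

results = {
    "order_id_non_null": non_null_rate(rows, "order_id") >= 0.999,
    "status_cardinality": distinct_count(rows, "status") <= 2,
    "freshness": is_fresh(latest, now, timedelta(minutes=15)),
}
print(results)
```

Here the null identifier and the stray `PAID` value both fail their checks while freshness passes, giving the producer a specific, measurable violation to act on.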

💬 Organizational Outcome: Data contracts make ownership explicit. Producers know what they must guarantee. Consumers trust what they use. Reliability stops being a firefighting exercise and becomes an ongoing practice.

🔬 Column‑Level Lineage and Observability

The next frontier for effective contracts is column‑level lineage: mapping how specific fields flow from source systems to warehouses to dashboards.

Column‑level lineage enhances traceability by connecting each piece of data to its upstream source contract. When paired with observability tools, this provides:

- Rapid root cause analysis for schema breaks

- Clear visibility into impacted downstream assets

- Audit‑ready documentation for governance and compliance reports

Modern data catalogs and lineage engines are evolving to integrate data contracts directly, closing the loop between metadata and real‑time operations.
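Impact analysis over column-level lineage is essentially a graph traversal. The sketch below assumes lineage is available as a mapping from each column to the columns and assets derived from it. The `LINEAGE` graph and all names in it are made up for illustration, and a real lineage engine would supply this graph from parsed queries or catalog metadata.

```python
from collections import deque

# Hypothetical lineage graph: upstream column -> directly derived columns/assets.
LINEAGE = {
    "orders_db.orders.amount": ["warehouse.fct_orders.amount"],
    "warehouse.fct_orders.amount": [
        "warehouse.agg_daily_revenue.revenue",
        "ml.features.avg_order_value",
    ],
    "warehouse.agg_daily_revenue.revenue": ["dashboard.revenue_overview"],
}


def downstream_of(column, lineage):
    """Breadth-first walk returning every asset affected by `column`."""
    seen = set()
    affected = []
    queue = deque([column])
    while queue:
        current = queue.popleft()
        for child in lineage.get(current, []):
            if child not in seen:
                seen.add(child)
                affected.append(child)
                queue.append(child)
    return affected


for asset in downstream_of("orders_db.orders.amount", LINEAGE):
    print(asset)
```

If the contract for `orders_db.orders.amount` is violated, this list tells the owning team which tables, features, and dashboards to flag, nearest dependencies first.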

🧠 Implementation Blueprint

For teams solving reliability and ownership problems, here’s a structured rollout path:

- Define your core datasets and assign producers/owners.

- Adopt a schema registry (e.g., Confluent Schema Registry for Kafka topics) or a metadata platform that tracks schemas (e.g., OpenMetadata).

- Version schemas and publish them as reusable contract definitions.

- Automate contract validation within CI/CD pipelines.

- Store metrics for SLA compliance and notify stakeholders on violations.

This operational layer ensures contracts are living system assets — not static documents.
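For the versioning step in the rollout above, one possible convention is to borrow semantic versioning from software: a breaking change bumps the major version, an additive change bumps the minor version, and anything else bumps the patch version. This mapping is an assumption made for the sketch, not something every team follows, and the function name is hypothetical.

```python
from typing import Dict


def next_version(current: str, old: Dict[str, str], new: Dict[str, str]) -> str:
    """Suggest the next contract version from the kind of schema change."""
    major, minor, patch = (int(part) for part in current.split("."))

    # Removed, renamed, or retyped columns break existing consumers.
    breaking = any(name not in new or new[name] != dtype for name, dtype in old.items())
    # New columns extend the schema without breaking anyone.
    additive = any(name not in old for name in new)

    if breaking:
        return f"{major + 1}.0.0"
    if additive:
        return f"{major}.{minor + 1}.0"
    return f"{major}.{minor}.{patch + 1}"  # e.g. documentation or metadata edits


v1 = {"order_id": "int64", "amount": "float64"}

print(next_version("1.2.3", v1, {**v1, "currency": "string"}))
print(next_version("1.2.3", v1, {"order_id": "int64"}))
print(next_version("1.2.3", v1, dict(v1)))
```

With a rule like this in place, a major version bump becomes the visible signal that downstream consumers need to review the change before adopting it.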

🏁 Conclusion

In 2025, data contracts are transforming data platforms from reactive systems into reliable, governed infrastructure. They are the foundation for schema governance, backward compatibility, and repeatable data quality SLAs.

The result?

Less downtime, clearer accountability, and faster delivery cycles.

With contracts, data becomes trustworthy by design — a shared product, not a fragile artifact.